More New Thoughts About The Universe

Tuesday 24th March 2015

Kavli Institute for Cosmology Cambridge, UK

Supported by a mini-grant from the Foundational Questions Institute.

Participants:

- Mustapha Amin

- Feraz Azhar

- Daniel Baumann

- Jonathan Braden

- Anthony Challinor

- Jens Chluba (AM only)

- George Efstathiou

- Steven Gratton

- Antony Lewis

- Paul McFadden

- Daniel Mortlock

- Hiranya Peiris

- Andrew Pontzen

- Eva Silverstein

- Anze Slosar

Programme:

Tuesday 24th March

- 9:30am-10:15am: Arrive: Tea/Coffee, Doughnuts and Cookies outside the room

- 10:15am: Start

- 10:15-11:15am: Inflation

- Inflation from the top down (DB)

- 11:15-11:30am: Tea/Coffee outside the room

- 11:30am-1:15pm: Eternal Inflation and Measures

- Stochastic Eternal Inflation (SG)

- Infinities in Cosmology (GPE)

- 1:30-2:45pm: Lunch at The Plough, Coton

- 3pm-4:15pm: Statistical Mechanics and Entropy

- Describing ensembles (AP)

- Entropy in preheating (JB)

- 4:15-4:30pm: Tea/Coffee outside the room

- 4:30-6:45pm: Inference, other and more inflation

- Inference and Models (DM)

- BBN (AS)

- Negative temperatures and phantom models (AL)

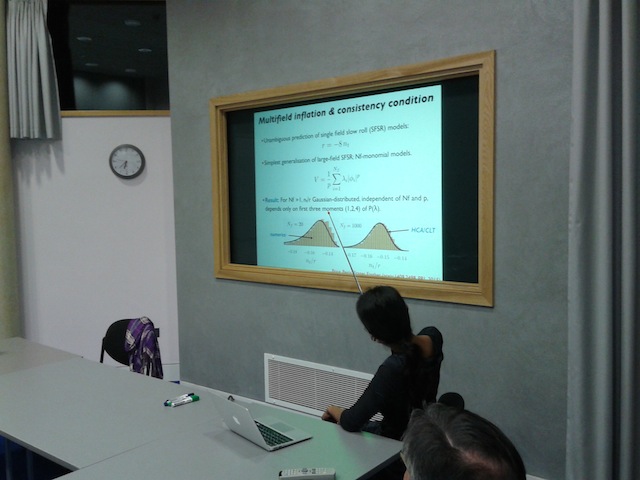

- Predictions for classes of Inflation models (HP)

- 7pm: Dinner at Restaurant 22

Potential Topics and Preparatory Material:

Decoherence in quantum field theory without an external environment (JB)

Starting from a pure state, how does a nonequilibrium quantum field theory generate entropy and decohere into an effectively classical statistical ensemble? This has cosmological applications to the initial density perturbations, (p)reheating, and bubble nucleation in first-order phase transitions. If there are no additional environmental degrees of freedom that the field couples to, then the standard mechanism of environment-induced decoherence cannot be used. Instead, the field must decohere itself in some way. One obvious way for this to occur is that high-order correlation functions may serve as an environment for the low-order correlators. This also provides a natural coarse-graining of the fields and thus creates an effective non-pure state with non-zero entropy. However, if coherent field configurations (such as topological defects, bubbles, oscillons, etc.) emerge as part of the dynamics, then it is clear that some additional information about correlations between different Fourier modes must be included. In addition, in the strongly nonlinear regime, the field theory may be more easily described in terms of some collective variables instead of the fundamental scalars. This leaves open interesting questions as to what the correct collective variables are, which correlations should be used, how these are dynamically selected during the time evolution, and what the time-scale for the effective decoherence of the system is.

Readings:

Inflation from the Top Down (DB)

Two challenges:

- Extra Fields

- Extra Scales

See Sec. 3 of a recent conference talk.

Conceptual Problems of Inflation (DB)

Ijjas, Steinhardt and Loeb vs Guth, Kaiser and Nomura, and see Sec. 4 of the above-mentioned talk.

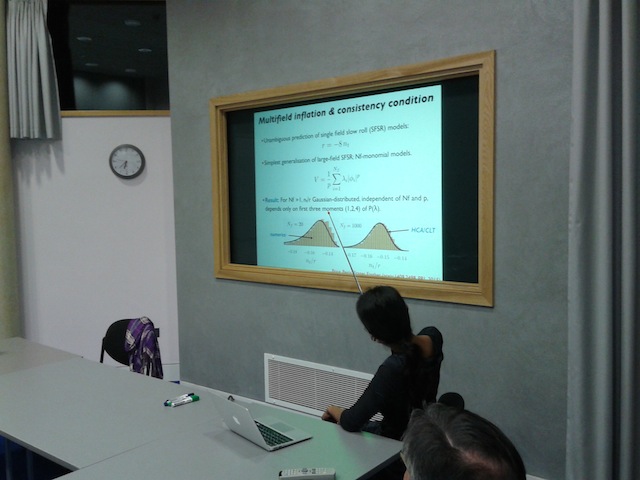

Inflation scenarios (HP)

Potential New Methodologies for studying many field inflation scenarios motivated by fundamental physics...

Further reading:

- Easther, Frazer, Peiris, Price, Phys. Rev. Lett. 112, 161302 (2014) pdf here

- Price, Peiris, Frazer, Easther, Phys. Rev. Lett. 114, 031301 (2015) pdf here

- Price, Frazer, Xu, Peiris, Easther, JCAP, 03, 005 (2015) pdf here

Negative temperatures - can they be realised in the Universe? (AL)

Negative temperatures imply negative pressures, but are only possible in particular constrained quantum systems and may be unstable. Is there any way to get and maintain such a quantum system for dark energy or inflation (or to re-interpret existing models in terms of negative temperatures)?
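For orientation, the link between negative temperature and negative pressure can be sketched with textbook thermodynamic identities. The two-level example and the symbols $\Delta$, $n_\uparrow$, $n_\downarrow$ are illustrative assumptions, not a model proposed for the session.

```latex
\begin{align}
  \frac{1}{T} &= \left(\frac{\partial S}{\partial E}\right)_V ,
  &
  P &= T \left(\frac{\partial S}{\partial V}\right)_E ,
  \\
  \frac{n_\uparrow}{n_\downarrow} &= e^{-\Delta / k_B T}
  &\Longrightarrow\quad
  T &= \frac{\Delta}{k_B \ln\left(n_\downarrow / n_\uparrow\right)} .
\end{align}
```

In this sketch, a population inversion ($n_\uparrow > n_\downarrow$) in a system whose energy is bounded above gives $T < 0$, and if the entropy still increases with volume, $(\partial S/\partial V)_E > 0$, the second identity gives $P < 0$.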

Readings:

What is the ensemble? (AP)

When we make arguments in statistical mechanics, we typically maximize the entropy of some distribution of particles subject to constraints. We can maximize, for instance, the entropy of the distribution of particles in different buckets i subject to fixed mean energy and particle number. However, the actual number of particles in a given phase space volume, n_i, is a physically real thing, and so from the Jaynes perspective this seems like the wrong sort of argument. We seem to be slightly confusing our subjective probabilities for the distribution with the actual frequency of particles in different states within the real system.
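The conventional step described above can be written out as follows. This is a minimal sketch of the standard textbook argument, with the bucket energies $\epsilon_i$, totals $N$ and $E$, and Lagrange multipliers $\alpha$, $\beta$ introduced here as assumed notation.

```latex
\begin{align}
  S[n] &= -\sum_i n_i \ln n_i ,
  \qquad
  \sum_i n_i = N ,
  \qquad
  \sum_i n_i \epsilon_i = E ,
  \\
  0 &= \frac{\partial}{\partial n_i}
       \left[ S - \alpha \Big(\sum_j n_j - N\Big)
                - \beta \Big(\sum_j n_j \epsilon_j - E\Big) \right]
     = -\ln n_i - 1 - \alpha - \beta \epsilon_i ,
  \\
  n_i &= \frac{N}{Z}\, e^{-\beta \epsilon_i} ,
  \qquad
  Z = \sum_i e^{-\beta \epsilon_i} .
\end{align}
```

Note that the quantity being varied here is the occupation number $n_i$ itself, which is exactly the feature the Jaynes perspective calls into question.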

We ought to be maximizing our uncertainty about the situation, which would amount to maximizing the uncertainty in the probability distribution function p(n), not in the occupation numbers themselves.

This point was made in a recent paper by Carron & Szapudi, in which they claimed that one ends up with a different version of statistical mechanics by "fixing" the problem. However, I have some calculations which show how to recover normal statistical mechanics from the extended version. So, beyond showing where Carron & Szapudi made the wrong assumptions, is this approach useful for anything? One can, for example, calculate typical fluctuations around the equilibrium with apparently fewer ad hoc assumptions than normally made. But are there more fundamental issues lurking here?

The Flipped Universe (AP)

If we make an initial realization of a Gaussian random field for the density fluctuations, delta(x), its mirror image under delta(x) -> -delta(x) has exactly the same 2-point properties. So one can evolve both the original and mirror universe in a nonlinear N-body code and see what happens. Voids map onto halos and vice versa. Also, one should be able to pull out some insight into non-linear evolution by looking at the cross-spectra of the vanilla and mirror universes. How useful could this technique be in practice?
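As a minimal sketch of the starting point only (no N-body evolution is attempted), the NumPy snippet below builds a toy 2D Gaussian random field, flips its sign, and checks that the two auto-spectra agree mode by mode while the cross-spectrum is exactly minus the auto-spectrum. The helper names `gaussian_field` and `cross_power`, the power-law slope, the grid size and the normalization are all illustrative assumptions.

```python
import numpy as np


def gaussian_field(n, rng, slope=-2.0):
    """Hypothetical helper: toy 2D Gaussian random field with P(k) ~ k**slope."""
    kx = np.fft.fftfreq(n)
    ky = np.fft.fftfreq(n)
    k = np.sqrt(kx[:, None] ** 2 + ky[None, :] ** 2)
    k[0, 0] = 1.0                  # placeholder to avoid 0**negative
    amp = k ** (slope / 2.0)       # amplitude is the square root of the power
    amp[0, 0] = 0.0                # remove the mean (k = 0) mode
    white = rng.standard_normal((n, n))
    # Filtering real white noise with an isotropic amplitude keeps the field real.
    return np.fft.ifft2(np.fft.fft2(white) * amp).real


def cross_power(a, b):
    """Unbinned per-mode cross-spectrum Re[a_k conj(b_k)] (toy normalization)."""
    return (np.fft.fft2(a) * np.conj(np.fft.fft2(b))).real / a.size


rng = np.random.default_rng(0)
delta = gaussian_field(64, rng)    # "vanilla" initial conditions
flipped = -delta                   # "mirror" initial conditions

p_orig = cross_power(delta, delta)
p_flip = cross_power(flipped, flipped)
p_cross = cross_power(delta, flipped)

print("auto-spectra identical:", np.allclose(p_orig, p_flip))
print("cross-spectrum = -auto:", np.allclose(p_cross, -p_orig))
```

Under purely linear evolution both relations would continue to hold, so any departure of the evolved cross-spectrum from minus the auto-spectrum would come from the nonlinear dynamics.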

Hostless Gamma Ray Bursts (DM)

A significant minority of high-redshift GRBs don't seem to reside in a host galaxy, even with deep HST imaging of the field. This isn't unreasonable, since stellar-mass objects are known to be ejected from galaxies (cf. recent observations of hyper-velocity stars in the Milky Way), but the numbers are perhaps surprising. The question is whether the dominant explanation for no-host GRBs is that they are: in host galaxies that are too distant/faint to be seen; ejected from one of the observed galaxies along nearby lines-of-sight; or ejected from galaxies much further away, and so essentially untraceable. A good starting reference is the catchily-titled 'A short gamma-ray burst "no-host" problem? Investigating large progenitor offsets for short GRBs with optical afterglows', Berger, E., 2010, ApJ, 722, 1946 (arXiv:1007.0003).

Bayesian Model Comparison for Cosmology (DM)

How should one extend model comparison to situations without compelling parameter priors? See Bayesian Model Comparison in Cosmology.
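To illustrate why the absence of a compelling prior matters, here is a minimal sketch using a toy nested comparison: M0 fixes a parameter at zero, while M1 gives it a zero-mean Gaussian prior of adjustable width. The function name `log_bayes_factor_01`, the Gaussian likelihood and prior, and the numbers used are all assumptions chosen for illustration, not taken from the reading above.

```python
import math


def log_bayes_factor_01(xbar, sigma, prior_width):
    """ln(evidence of M0 / evidence of M1) for a toy Gaussian problem.

    Assumed setup: one measurement xbar with Gaussian error sigma.
    M0: parameter fixed at 0. M1: parameter ~ Normal(0, prior_width**2).
    Both evidences are then zero-mean Gaussians in xbar.
    """
    var0 = sigma ** 2
    var1 = sigma ** 2 + prior_width ** 2   # prior variance adds to noise variance
    log_ev0 = -0.5 * math.log(2.0 * math.pi * var0) - 0.5 * xbar ** 2 / var0
    log_ev1 = -0.5 * math.log(2.0 * math.pi * var1) - 0.5 * xbar ** 2 / var1
    return log_ev0 - log_ev1


# A "2 sigma" measurement, interpreted with increasingly wide priors on M1.
xbar, sigma = 2.0, 1.0
for width in (1.0, 10.0, 100.0, 1000.0):
    print(f"prior width {width:7.1f}: ln B01 = "
          f"{log_bayes_factor_01(xbar, sigma, width):+.3f}")
```

In this toy case the same data favour M1 for a narrow prior and M0 for a very wide one, so the conclusion is driven by a prior width that, by assumption, nothing in the physics fixes.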

Stochastic Eternal Inflation in Different Gauges (SG)

A few years ago I (Steven) managed to introduce a path integral for stochastic inflation for lambda-phi-4 that treated both stochastic jumps and volume-weighting on an equal footing (see an updated version of the arXiv preprint here). I've since extended the work to apply to more general potentials and to give results in different gauges. I'd like to hear what people think about this and indeed other approaches to predictions in eternal inflation.

Practical Details

The mini-grant will cover food, non-alcoholic drinks and hopefully any reasonable travel expenses that those coming from outside of Cambridge may incur!